PDigit's AI PORTFOLIO

Blending AI efficiency with human interpretation

WHY LLM FINE-TUNING, and is it for you?

What is Fine-Tuning?

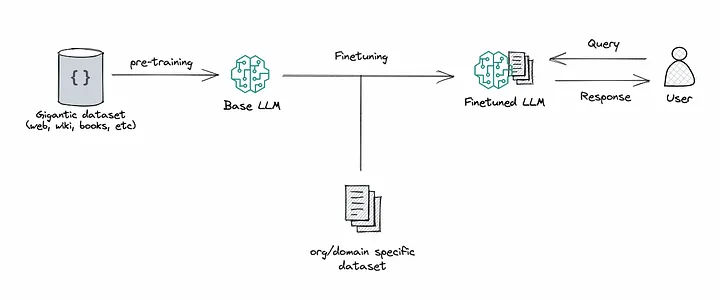

In the rapidly evolving world of artificial intelligence, Large Language Models (LLMs) like GPT have emerged as pivotal tools for processing and understanding human language. Trained on vast datasets, these models can perform a wide array of language-related tasks, from translation to content creation with generative AI (GAI). However, much of their potential for specialized work is unlocked through a process known as fine-tuning.

Img Ref https://miro.medium.com/v2/resize:fit:828/format:webp/0*5aZDn1DACKjcANXu.gif

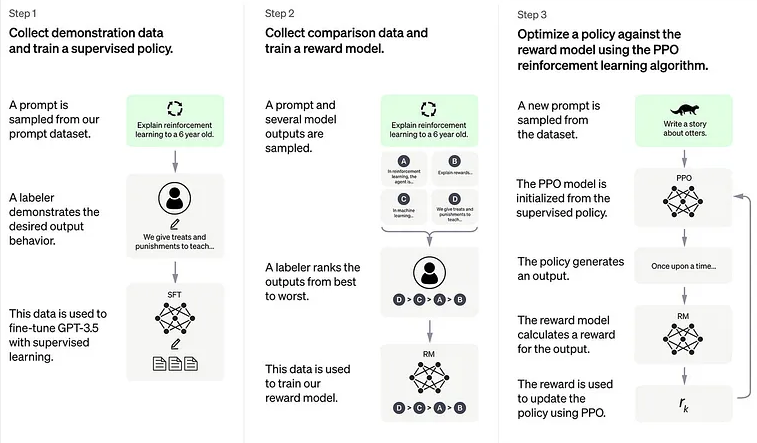

Fine-tuning refines a pre-trained model so that it performs better on a specific task. The model is trained further on a smaller, carefully labeled dataset that is closely related to the task at hand. This lets it adapt to niche domains such as customer support, medical research, or legal analysis. How much fine-tuning helps depends on the quality of that additional data and on how the additional training is carried out.

The additional dataset supplies new, domain-specific information. The training itself is often coupled with a feedback or rating system that evaluates the model's outputs and guides it toward better results.
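As a rough illustration of these two ingredients, the sketch below builds a tiny labeled dataset and uses ratings to decide which examples go into the next training round. The field names (`prompt`, `completion`, `rating`), the 1-to-5 scale, and the threshold are assumptions made for this example, not a format required by any particular fine-tuning service.

```python
import json

# Hypothetical labeled examples for a customer-support model.
# Each record pairs an input with the answer we want the model to learn,
# plus a human rating (1-5) of that answer's quality.
examples = [
    {"prompt": "How do I reset my password?",
     "completion": "Open Settings, choose Security, then select Reset password.",
     "rating": 5},
    {"prompt": "Where can I download my invoice?",
     "completion": "Invoices are listed under Billing in your account page.",
     "rating": 4},
    {"prompt": "Can I change my delivery address?",
     "completion": "I don't know.",
     "rating": 1},
]

def select_training_examples(records, min_rating=4):
    """Keep only examples whose rating meets the threshold."""
    return [r for r in records if r["rating"] >= min_rating]

def to_jsonl(records):
    """Serialize prompt/completion pairs, one JSON object per line."""
    lines = [json.dumps({"prompt": r["prompt"], "completion": r["completion"]})
             for r in records]
    return "\n".join(lines)

selected = select_training_examples(examples)
training_file_contents = to_jsonl(selected)
print(training_file_contents)
```

The low-rated answer is filtered out before training, which is one simple way a rating system can steer the model toward better results.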

Fine-tuning an AI model incurs additional costs, but it is often much more cost-effective in the long run compared to training a language model from scratch.
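To get a feel for the size of that gap, a common rule of thumb estimates training compute as roughly 6 × parameters × training tokens. The sketch below applies it to a hypothetical 7-billion-parameter model. The token counts are illustrative assumptions, not measurements from any real training run, and the estimate assumes every weight is updated during fine-tuning.

```python
def training_flops(num_parameters: float, num_tokens: float) -> float:
    """Rule-of-thumb estimate: about 6 FLOPs per parameter per training token."""
    return 6 * num_parameters * num_tokens

# All figures below are hypothetical, chosen only to show the order of magnitude.
PARAMETERS = 7e9            # a 7-billion-parameter model
PRETRAIN_TOKENS = 1e12      # tokens seen when training from scratch
FINETUNE_TOKENS = 1e7       # tokens in a task-specific fine-tuning set

pretrain = training_flops(PARAMETERS, PRETRAIN_TOKENS)
finetune = training_flops(PARAMETERS, FINETUNE_TOKENS)

print(f"From scratch: {pretrain:.1e} FLOPs")
print(f"Fine-tuning:  {finetune:.1e} FLOPs")
print(f"Ratio:        {pretrain / finetune:,.0f}x")
```

Under this estimate the ratio depends only on how many tokens each stage processes, which is why a small, focused dataset keeps fine-tuning comparatively cheap.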

Increased Accuracy and Relevance

One of the most significant advantages of fine-tuning LLMs is the substantial increase in accuracy and relevance of their outputs. Unlike their generic counterparts, fine-tuned models are tailored to specific domains, industries, or even individual user preferences, enabling them to generate more precise and applicable responses. For instance, a model trained on general language data might produce generic responses to medical inquiries. In contrast, a fine-tuned model, specifically trained on medical literature and case studies, can provide more accurate, informed, and contextually relevant information. This heightened accuracy is not just a matter of convenience but is critical in fields where precision of information is paramount.

Furthermore, fine-tuning allows these models to grasp and mirror specific linguistic styles and terminologies unique to certain fields, making their interaction more intuitive and effective for professionals within those domains.

As mentioned in the introduction, closed-source LLMs such as ChatGPT or Bard can change, and have changed, continuously without notice and without giving users a choice. As a result, the same prompt can return different results over time, because new training, filters, or policy changes are decided for you.

A fine-tuned model that you control can reduce this ‘replication’ problem: with fixed weights and fixed generation settings, the same prompt can be made to return the same output. With general hosted LLMs like ChatGPT or Bard this is a major known issue, partly because they are closed-source and change often “without notice”.
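The reproducibility point comes down to control: if the weights and the decoding settings are fixed, the same prompt yields the same output. The toy sketch below stands in for a self-hosted model with a frozen lookup table of next-word probabilities (entirely made up for illustration) and shows that greedy decoding, or sampling with a fixed seed, repeats exactly.

```python
import random

# A frozen, made-up "model": next-word probabilities for each current word.
# In a real deployment this role is played by fixed model weights.
FROZEN_MODEL = {
    "<start>": {"fine": 0.6, "general": 0.4},
    "fine": {"tuning": 0.9, "print": 0.1},
    "general": {"models": 0.7, "tuning": 0.3},
    "tuning": {"<end>": 1.0},
    "print": {"<end>": 1.0},
    "models": {"<end>": 1.0},
}

def generate(greedy=True, seed=None, max_words=10):
    """Generate words until <end>, either greedily or by seeded sampling."""
    rng = random.Random(seed)
    word, output = "<start>", []
    for _ in range(max_words):
        choices = FROZEN_MODEL[word]
        if greedy:
            # Always pick the most likely next word: no randomness involved.
            word = max(choices, key=choices.get)
        else:
            # Sample according to the probabilities, using the seeded generator.
            word = rng.choices(list(choices), weights=list(choices.values()))[0]
        if word == "<end>":
            break
        output.append(word)
    return " ".join(output)

# Greedy decoding has no randomness, so repeated calls agree.
print(generate(greedy=True) == generate(greedy=True))
# Sampling is reproducible as long as the seed is fixed.
print(generate(greedy=False, seed=42) == generate(greedy=False, seed=42))
```

If the table (the "weights") were swapped out without notice, as can happen with a hosted service, neither guarantee would hold.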

Efficiency and Cost-Effectiveness

Another key advantage of fine-tuning LLMs lies in efficiency and cost-effectiveness. Fine-tuning a pre-trained model requires significantly less computational power and fewer resources than training a model from scratch. It can also lower running costs when a smaller fine-tuned model takes the place of a larger general-purpose one.

In that case the efficiency shows up as faster response times and lower processing requirements, which are crucial for real-time applications. Fine-tuning can also be the more economical approach for businesses and organizations that want to implement AI solutions without the hefty investment associated with training large models.

By focusing on specific datasets and objectives, fine-tuning ensures that resources are utilized in the most effective manner, yielding higher returns on investment and making advanced AI technologies more accessible to a broader range of users.
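One concrete way a fine-tuned model can lower per-request processing is by shortening prompts: behaviour that a general model needs spelled out through instructions and examples in every request can instead be learned once during fine-tuning. The sketch below compares the two prompt styles using a crude whitespace word count as a stand-in for tokens. The prompts and the counting method are assumptions for illustration only.

```python
def approx_tokens(text: str) -> int:
    """Crude token estimate: count whitespace-separated words."""
    return len(text.split())

question = "Customer: My order arrived damaged. What should I do?"

# Hypothetical prompt for a general model: instructions and worked
# examples must be repeated with every request.
few_shot_prompt = "\n".join([
    "You are a support agent. Answer politely and in two sentences or fewer.",
    "Customer: How do I reset my password?",
    "Agent: Open Settings, choose Security, then select Reset password.",
    "Customer: Where can I download my invoice?",
    "Agent: Invoices are listed under Billing in your account page.",
    question,
    "Agent:",
])

# Hypothetical prompt for a fine-tuned model: the style and format were
# learned during training, so only the question is sent.
fine_tuned_prompt = question + "\nAgent:"

general_count = approx_tokens(few_shot_prompt)
tuned_count = approx_tokens(fine_tuned_prompt)
print(f"General model prompt:    ~{general_count} tokens")
print(f"Fine-tuned model prompt: ~{tuned_count} tokens")
print(f"Tokens saved per request: ~{general_count - tuned_count}")
```

Real tokenizers count differently from a word split, so the absolute numbers are only indicative; the point is that the saving repeats on every request.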

Adaptability and Customization

The adaptability and customization offered by fine-tuning LLMs stand out as another significant benefit. Fine-tuned models are not limited to understanding specific jargon or topics; they can be tailored to the nuances of regional dialects, cultural contexts, and even individual speech patterns. This level of customization allows for a more personalized user experience and makes these models versatile tools for diverse applications, from customer service chatbots that understand and mimic local colloquialisms to personalized educational assistants that adapt to the learning style and pace of the student. Because a model can be fine-tuned again as new data arrives, it can keep improving and stay aligned with the changing needs and preferences of its users.

Conclusion: Why Fine-Tuning, and is it for you?

In conclusion, the fine-tuning of Large Language Models like GPT presents a myriad of advantages that significantly enhance their application and effectiveness. By improving the accuracy and relevance of the outputs, increasing efficiency and cost-effectiveness, and offering unparalleled adaptability and customization, fine-tuning makes these already powerful models even more potent and accessible tools. As the field of artificial intelligence continues to advance, the strategic importance of fine-tuning in unlocking the full potential of LLMs becomes increasingly evident, paving the way for more innovative and tailored AI solutions in various sectors. The ongoing development in this area promises exciting possibilities for the future, where AI can be more seamlessly integrated into every aspect of our digital lives.

References (external links):

- Choosing between public and private LLMs

- LLM Fine-tuning LLama2 with hands-on example

- Report from Microsoft AI Reveals the Impact of Fine-Tuning and Retrieval-Augmented Generation (RAG) on Large Language Models in Agriculture